chemlab.graphics¶

This package contains the features related to the graphic capabilities of chemlab.

Ready to use functions¶

The following two functions are a convenient way to quickly display and animate a System in chemlab.

- chemlab.graphics.display_system(sys, style='vdw')¶

Display the system sys with the default viewer.

- chemlab.graphics.display_trajectory(sys, times, coords_list, style='spheres')¶

Display the system sys and set up the trajectory viewer with the frame information.

Parameters

- sys: System

- The system to be displayed

- times: np.ndarray(NFRAMES, dtype=float)

- The time corresponding to each frame. This is used only to give feedback to the user.

- coords_list: list of np.ndarray((NATOMS, 3), dtype=float)

- Atomic coordinates at each frame. The list holds one array per frame, so it has NFRAMES entries.

Builtin 3D viewers¶

The QtViewer class¶

- class chemlab.graphics.QtViewer¶

Bases: PySide.QtGui.QMainWindow

View objects in space.

This class can be used to build your own visualization routines by attaching renderers and UIs to it.

Example

In this example we draw 3 blue dots and some overlay text:

    from chemlab.graphics import QtViewer
    from chemlab.graphics.renderers import PointRenderer
    from chemlab.graphics.uis import TextUI

    vertices = [[0.0, 0.0, 0.0], [0.0, 1.0, 0.0], [2.0, 0.0, 0.0]]
    # (r, g, b, a), each in the range [0, 255]
    blue = (0, 0, 255, 255)
    colors = [blue,] * 3

    v = QtViewer()
    pr = v.add_renderer(PointRenderer, vertices, colors)
    tu = v.add_ui(TextUI, 100, 100, 'Hello, world!')
    v.run()

- add_post_processing(klass, *args, **kwargs)¶

Add a post processing effect to the current scene.

Usage is as follows:

    from chemlab.graphics import QtViewer
    from chemlab.graphics.postprocessing import SSAOEffect

    v = QtViewer()
    effect = v.add_post_processing(SSAOEffect)

Return

an instance of AbstractEffect

New in version 0.3.

- add_renderer(klass, *args, **kwargs)¶

Add a renderer to the current scene.

Parameters

- klass: renderer class

- The renderer class to be added

- args, kwargs:

- Arguments used by the renderer constructor, except for the widget argument.

Return

The instantiated renderer. Keep the return value so that you can update the renderer at run-time.

- add_ui(klass, *args, **kwargs)¶

Add a UI element to the current scene. The approach is the same as for renderers.

Warning

The UI API is not yet finalized.

- remove_post_processing(pp)¶

Remove a post processing effect.

New in version 0.3.

- remove_renderer(rend)¶

Remove a renderer from the current view.

Example

    rend = v.add_renderer(AtomRenderer)
    v.remove_renderer(rend)

New in version 0.3.

- run()¶

Display the QtViewer

- schedule(callback, timeout=100)¶

Schedule a function to be called repeatedly at a fixed interval.

This method can be used to perform animations.

Example

This is a typical way to perform an animation:

    from chemlab.graphics import QtViewer
    from chemlab.graphics.renderers import SphereRenderer

    v = QtViewer()
    sr = v.add_renderer(SphereRenderer, centers, radii, colors)

    def update():
        # calculate new_positions
        sr.update_positions(new_positions)
        v.widget.repaint()

    v.schedule(update)
    v.run()

Note

Remember to call QtViewer.widget.repaint() each time you want to update the display.

Parameters

- callback: function()

- A function that takes no arguments that will be called at intervals.

- timeout: int

- Time in milliseconds between calls of the callback function.

Returns a QTimer. To stop the animation, call QTimer.stop().
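Because the callback takes no arguments, any animation state has to live outside it, for example in a closure. The following is a minimal plain-Python sketch of that pattern. The names `make_frame_cycler` and `apply_frame` are hypothetical, and the loop at the end only stands in for the timer that the viewer would drive.

```python
def make_frame_cycler(frames, apply_frame):
    """Return a zero-argument callback that applies the next frame on each call.

    `frames` is any sequence of per-frame data and `apply_frame` is a
    function that consumes one frame (both are hypothetical names).
    """
    state = {"index": 0}

    def update():
        apply_frame(frames[state["index"]])
        # Wrap around so the animation loops.
        state["index"] = (state["index"] + 1) % len(frames)

    return update


# Stand-in for the viewer's timer: call the callback a few times by hand.
shown = []
update = make_frame_cycler(["frame0", "frame1", "frame2"], shown.append)
for _ in range(4):
    update()
```

With a real viewer, `apply_frame` would typically update a renderer and then repaint the widget, and `update` would be the function passed to schedule.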

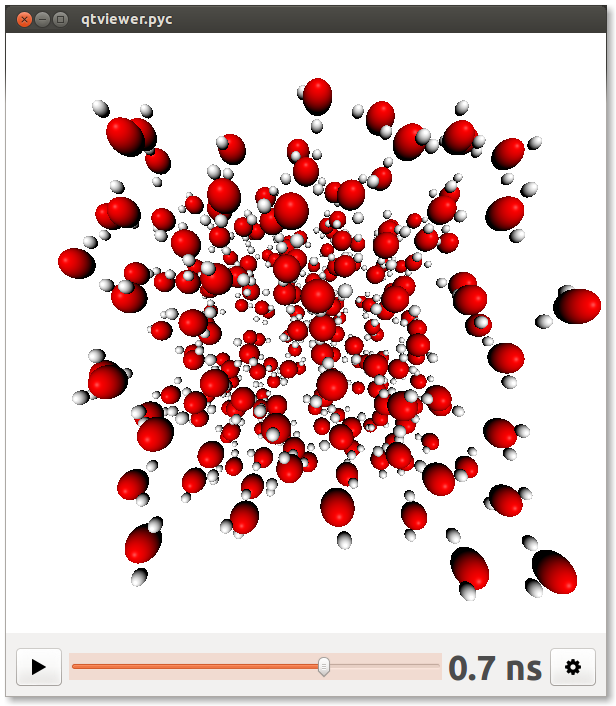

The QtTrajectoryViewer class¶

- class chemlab.graphics.QtTrajectoryViewer¶

Bases: PySide.QtGui.QMainWindow

Interface for viewing trajectories.

It provides interface elements to play/pause and set the speed of the animation.

Example

To set up a QtTrajectoryViewer you have to add renderers to the scene, set the number of frames present in the animation by calling chemlab.graphics.QtTrajectoryViewer.set_ticks(), and define an update function.

Below is an example taken from the function chemlab.graphics.display_trajectory():

    from chemlab.graphics import QtTrajectoryViewer
    from chemlab.graphics.renderers import AtomRenderer, BoxRenderer

    # sys = some System
    # coords_list = some list of atomic coordinates
    # times = the time corresponding to each frame
    # format_time = helper that turns a time into display text

    v = QtTrajectoryViewer()
    sr = v.add_renderer(AtomRenderer, sys.r_array, sys.type_array,
                        backend='impostors')
    br = v.add_renderer(BoxRenderer, sys.box_vectors)

    v.set_ticks(len(coords_list))

    @v.update_function
    def on_update(index):
        sr.update_positions(coords_list[index])
        br.update(sys.box_vectors)
        v.set_text(format_time(times[index]))
        v.widget.repaint()

    v.run()

Warning

Use with caution, the API for this element is not fully stabilized and may be subject to change.

- add_renderer(klass, *args, **kwargs)¶

The behaviour of this function is the same as chemlab.graphics.QtViewer.add_renderer().

- add_ui(klass, *args, **kwargs)¶

Add a UI element to the current scene. The approach is the same as for renderers.

Warning

The UI API is not yet finalized.

- set_text(text)¶

Update the time indicator in the interface.

- set_ticks(number)¶

Set the number of frames to animate.

- update_function(func)¶

Set the function to be called when it’s time to display a frame.

func should be a function that takes one integer argument, the index of the frame to be displayed:

    def func(index):
        # Update the renderers to match the
        # current animation index
        pass
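To make the callback wiring concrete, here is a plain-Python stand-in that mimics only the registration and the per-frame call. `SketchTrajectoryViewer` and `play_once` are hypothetical names used for illustration. The real class drives the callback from its play/pause controls and may differ in detail.

```python
class SketchTrajectoryViewer:
    """Hypothetical stand-in that mimics only the callback wiring."""

    def __init__(self):
        self._ticks = 0
        self._on_update = None

    def set_ticks(self, number):
        self._ticks = number

    def update_function(self, func):
        # Store the callback and return it, so the method can be
        # used as a decorator without unbinding the function name.
        self._on_update = func
        return func

    def play_once(self):
        # Hypothetical helper: call the callback once per frame index.
        for index in range(self._ticks):
            self._on_update(index)


v = SketchTrajectoryViewer()
v.set_ticks(3)
seen = []


@v.update_function
def on_update(index):
    seen.append(index)


v.play_once()
```

The callback receives each frame index from 0 up to the number of ticks minus one, which is why set_ticks is called with the length of the coordinate list in the example above.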

Renderers and UIs¶

Post Processing Effects¶

Low level widgets¶

The QChemlabWidget class¶

This is the molecular viewer widget used by chemlab.

- class chemlab.graphics.QChemlabWidget(*args, **kwargs)¶

Extensible and modular OpenGL widget developed using the Qt (PySide) Framework. This widget can be used in other PySide programs.

The widget by itself doesn’t draw anything; it delegates the drawing task to external components called ‘renderers’ that expose the interface found in AbstractRenderer. Renderers are responsible for drawing objects in space and have access to their parent widget.

To attach a renderer to QChemlabWidget you can simply append it to the renderers attribute:

    from chemlab.graphics import QChemlabWidget
    from chemlab.graphics.renderers import SphereRenderer

    widget = QChemlabWidget()
    widget.renderers.append(SphereRenderer(widget, ...))

You can also add other elements to the scene, such as user interface elements (for example, some text). This is done in a way similar to renderers:

    from chemlab.graphics import QChemlabWidget
    from chemlab.graphics.uis import TextUI

    widget = QChemlabWidget()
    widget.uis.append(TextUI(widget, 200, 200, 'Hello, world!'))

Warning

At this point there is only one UI element available. PySide provides a lot of UI elements, so it is possible that UI elements will be converted into renderers.

QChemlabWidget has its own mouse gestures:

- Left Mouse Drag: Orbit the scene;

- Right Mouse Drag: Pan the scene;

- Wheel: Zoom the scene.

- renderers¶

Type: list of AbstractRenderer subclasses

A list containing the active renderers. QChemlabWidget will call their draw method when appropriate.

- camera¶

Type: Camera

The camera encapsulates our viewpoint on the world, that is, our position and our orientation. Use the camera to rotate, move, or zoom the scene.

- light_dir¶

Type: np.ndarray(3, dtype=float)

Default: np.array([0.0, 0.0, 1.0])

The light direction in camera space. Imagine you are standing in the scene looking at a certain point, with your position as the origin, and holding a lamp in your hand. light_dir is the direction this lamp is pointing. If you move, jump, or rotate, the lamp moves with you.

Note

With the current lighting mode there isn’t a “light position”. The light is assumed to be infinitely distant and light rays are all parallel to the light direction.
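As an illustration of what parallel light rays imply, the sketch below computes a Lambert diffuse term, which depends only on the surface normal and the light direction, never on the surface position. The sign convention is an assumption: the sketch treats light_dir as the unit vector pointing from a surface toward the light, so the default value fully lights a surface that faces the camera. The source does not confirm how the shaders use it, and `diffuse_intensity` is a hypothetical helper.

```python
import math


def normalize(v):
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)


def diffuse_intensity(normal, light_dir):
    """Lambert term max(0, n . l) for a directional (infinitely distant) light.

    Assumption: light_dir is used as the unit vector toward the light.
    """
    n = normalize(normal)
    to_light = normalize(light_dir)
    return max(0.0, sum(a * b for a, b in zip(n, to_light)))


light_dir = (0.0, 0.0, 1.0)  # default from the text, in camera space
facing_camera = diffuse_intensity((0.0, 0.0, 1.0), light_dir)
sideways = diffuse_intensity((1.0, 0.0, 0.0), light_dir)
```

Under this assumption a normal that points at the camera gets full intensity and a normal perpendicular to the light gets none, wherever the surface sits in the scene.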

- background_color¶

Type: tuple

Default: (255, 255, 255, 255) (white)

A 4-element (r, g, b, a) tuple that specifies the background color. Values for r, g, b, and a are in the range [0, 255]. You can use the colors contained in chemlab.graphics.colors.

- paintGL()¶

GL function called each time a frame is drawn

- toimage(width=None, height=None)¶

Return the current scene as a PIL Image.

Example

You can build your molecular viewer as usual and dump an image at any resolution supported by the video card (up to the memory limits):

    v = QtViewer()

    # Add the renderers
    v.add_renderer(...)

    # Add post processing effects
    v.add_post_processing(...)

    # Move the camera
    v.widget.camera.autozoom(...)
    v.widget.camera.orbit_x(...)
    v.widget.camera.orbit_y(...)

    # Save the image
    image = v.widget.toimage(1024, 768)
    image.save("mol.png")

The Camera class¶

- class chemlab.graphics.camera.Camera¶

Our viewpoint on the 3D world. The Camera class can be used to access and modify the point from which we see the scene.

It also handles the projection matrix (the matrix we apply to project 3D points onto our 2D screen).

- position¶

Type: np.ndarray(3, float)

Default: np.array([0.0, 0.0, 5.0])

The position of the camera. You can modify this attribute to move the camera in various directions using the absolute x, y and z coordinates.

- a, b, c

Type: np.ndarray(3), np.ndarray(3), np.ndarray(3), dtype=float

Default: a: np.array([1.0, 0.0, 0.0]), b: np.array([0.0, 1.0, 0.0]), c: np.array([0.0, 0.0, -1.0])

These three vectors represent the camera orientation. The a vector points to our right, b points upwards, and c points in front of us.

By default the camera points in the negative z-axis direction.
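The default values can be checked with a few lines of plain Python. The three vectors are mutually perpendicular unit vectors, and the forward vector c equals b × a, the negative of a × b, which is the usual OpenGL arrangement of right, up, and a view direction along negative z. The helper names are hypothetical, and tuples stand in for NumPy arrays.

```python
def cross(u, v):
    return (u[1] * v[2] - u[2] * v[1],
            u[2] * v[0] - u[0] * v[2],
            u[0] * v[1] - u[1] * v[0])


def dot(u, v):
    return sum(x * y for x, y in zip(u, v))


# Default orientation from the text.
a = (1.0, 0.0, 0.0)   # right
b = (0.0, 1.0, 0.0)   # up
c = (0.0, 0.0, -1.0)  # forward

is_orthonormal = (
    dot(a, a) == dot(b, b) == dot(c, c) == 1.0
    and dot(a, b) == dot(a, c) == dot(b, c) == 0.0
)
forward_from_basis = cross(b, a)  # equals c for the defaults
```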

- pivot¶

Type: np.ndarray(3, dtype=float)

Default: np.array([0.0, 0.0, 0.0])

The point we will orbit around by using Camera.orbit_x() and Camera.orbit_y().

- matrix¶

Type: np.ndarray((4, 4), dtype=float)

Camera matrix. It contains the rotations and translations needed to transform the world according to the camera position. It is generated from the a, b, and c vectors.
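One common way to build such a matrix from an orientation basis and a position is sketched below. This is the standard look-at style construction, not necessarily the exact code chemlab uses, and `view_matrix` and `transform` are hypothetical helpers that use nested lists in place of NumPy arrays.

```python
def dot(u, v):
    return sum(x * y for x, y in zip(u, v))


def view_matrix(a, b, c, position):
    """Build a 4x4 world-to-camera matrix from right (a), up (b), forward (c)."""
    # In camera space the viewer looks down -z, so the third row is -c.
    back = tuple(-x for x in c)
    rows = []
    for axis in (a, b, back):
        # Rotate into the camera basis, then translate by -position.
        rows.append([axis[0], axis[1], axis[2], -dot(axis, position)])
    rows.append([0.0, 0.0, 0.0, 1.0])
    return rows


def transform(matrix, point):
    vec = (point[0], point[1], point[2], 1.0)
    return tuple(dot(row, vec) for row in matrix[:3])


m = view_matrix((1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, -1.0),
                (0.0, 0.0, 5.0))
origin_in_camera_space = transform(m, (0.0, 0.0, 0.0))
```

With the default camera, the world origin ends up 5 units in front of the viewer, at (0, 0, -5) in camera space, and the camera's own position maps to the camera-space origin.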

- projection¶

Type: np.ndarray((4, 4), dtype=float)

Projection matrix, generated from the projection parameters.

- z_near, z_far

Type: float, float

Near and far clipping planes. For more information, refer to: http://www.lighthouse3d.com/tutorials/view-frustum-culling/

- fov¶

Type: float

Field of view in degrees, used to generate the projection matrix.

- aspectratio¶

Type: float

Aspect ratio for the projection matrix. It should be updated when the application window is resized.
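For orientation, the sketch below shows how a standard OpenGL-style perspective matrix is built from these four parameters (the same form as `gluPerspective`). Whether chemlab uses exactly this form is an assumption, the numeric values are placeholders rather than the library defaults, and `perspective` and `project` are hypothetical helpers.

```python
import math


def perspective(fov_degrees, aspectratio, z_near, z_far):
    """Standard OpenGL-style perspective matrix (row-major nested lists)."""
    f = 1.0 / math.tan(math.radians(fov_degrees) / 2.0)
    depth = z_near - z_far
    return [
        [f / aspectratio, 0.0, 0.0, 0.0],
        [0.0, f, 0.0, 0.0],
        [0.0, 0.0, (z_far + z_near) / depth, 2.0 * z_far * z_near / depth],
        [0.0, 0.0, -1.0, 0.0],
    ]


def project(matrix, point):
    """Apply the matrix to a camera-space point and do the perspective divide."""
    vec = (point[0], point[1], point[2], 1.0)
    cx, cy, cz, cw = (sum(m * v for m, v in zip(row, vec)) for row in matrix)
    return (cx / cw, cy / cw, cz / cw)


# Placeholder values, not the library defaults.
proj = perspective(45.0, 4.0 / 3.0, 0.1, 100.0)
near_ndc = project(proj, (0.0, 0.0, -0.1))
far_ndc = project(proj, (0.0, 0.0, -100.0))
```

A point on the near plane lands at depth -1 in normalized device coordinates and a point on the far plane at depth 1, the same depth range that unproject() describes.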

- autozoom(points)¶

Fit the current view to the correct zoom level to display all points.

The camera viewing direction and rotation pivot are set to the geometric center of the points, and the distance from that point is calculated so that all points are in the field of view. This is currently used to provide optimal visualization for molecules and systems.

Parameters

- points: np.ndarray((N, 3))

- Array of points.
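The idea can be sketched in plain Python: take the geometric center of the points, find the radius of the sphere around it that encloses them, and back the camera off until that sphere fits inside the viewing cone. The formula below (radius divided by the sine of half the field of view) is one reasonable choice and an assumption, not necessarily what autozoom computes. It also ignores the aspect ratio, and `fit_distance` is a hypothetical helper.

```python
import math


def fit_distance(points, fov_degrees):
    """Return (center, distance) so a sphere enclosing `points` fits the view."""
    n = len(points)
    center = tuple(sum(p[i] for p in points) / n for i in range(3))
    # Radius of the sphere around the center that contains every point.
    radius = max(
        math.sqrt(sum((p[i] - center[i]) ** 2 for i in range(3)))
        for p in points
    )
    # A sphere of radius r touches a cone of half-angle t at distance r / sin(t).
    distance = radius / math.sin(math.radians(fov_degrees) / 2.0)
    return center, distance


center, distance = fit_distance([(-1.0, 0.0, 0.0), (1.0, 0.0, 0.0)], 60.0)
```

For two points one unit either side of the origin and a 60 degree field of view, the center is the origin and the camera needs to sit about 2 units away.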

- mouse_rotate(dx, dy)¶

Convenience function to implement the mouse rotation by giving two displacements in the x and y directions.

- mouse_zoom(inc)¶

Convenience function to implement a zoom function.

This is achieved by moving Camera.position in the direction of the Camera.c vector.
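In vector terms this is a single step along the forward direction. The sketch below assumes that a positive increment moves the camera forward and that no extra scaling is applied. Both are assumptions, and `zoom` is a hypothetical helper that uses tuples in place of NumPy arrays.

```python
def zoom(position, c, inc):
    """Move `position` by `inc` units along the forward vector `c`."""
    return tuple(p + inc * ci for p, ci in zip(position, c))


position = (0.0, 0.0, 5.0)  # default camera position from the text
c = (0.0, 0.0, -1.0)        # default forward vector from the text
closer = zoom(position, c, 1.0)
```

Starting from the defaults, one unit of zoom moves the camera from z = 5 to z = 4, towards the origin.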

- orbit_x(angle)¶

Same as orbit_y() but the axis of rotation is the Camera.b vector.

We rotate around the point as if we were sitting on the rim of a salad spinner.

- orbit_y(angle)¶

Orbit around the point Camera.pivot by the angle angle expressed in radians. The axis of rotation is the camera “right” vector, Camera.a.

In practice, we move around the point as if we were on a Ferris wheel.
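Both orbit methods amount to rotating the camera position around an axis that passes through the pivot. The sketch below does this with Rodrigues' rotation formula in plain Python. The direction of a positive angle is an assumption (right-handed here), and `rotate_about_axis` and `orbit` are hypothetical helpers.

```python
import math


def rotate_about_axis(v, axis, angle):
    """Rotate vector v around a unit-length axis by angle (radians)."""
    kx, ky, kz = axis
    cos_t, sin_t = math.cos(angle), math.sin(angle)
    k_cross_v = (ky * v[2] - kz * v[1],
                 kz * v[0] - kx * v[2],
                 kx * v[1] - ky * v[0])
    k_dot_v = kx * v[0] + ky * v[1] + kz * v[2]
    return tuple(
        v[i] * cos_t + k_cross_v[i] * sin_t + axis[i] * k_dot_v * (1.0 - cos_t)
        for i in range(3)
    )


def orbit(position, pivot, axis, angle):
    """Rotate `position` around the line through `pivot` along `axis`."""
    offset = tuple(p - q for p, q in zip(position, pivot))
    rotated = rotate_about_axis(offset, axis, angle)
    return tuple(r + q for r, q in zip(rotated, pivot))


# Quarter turn around the up vector b, starting from the default position.
new_position = orbit((0.0, 0.0, 5.0), (0.0, 0.0, 0.0), (0.0, 1.0, 0.0),
                     math.pi / 2)
```

To keep the camera looking at the pivot, the a, b, and c vectors need the same rotation applied to them. The distance from the pivot is unchanged by the orbit.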

- unproject(x, y, z=-1.0)¶

Take x and y as screen coordinates and return a point in world coordinates.

This function comes in handy each time we have to convert a 2d mouse click to a 3d point in our space.

Parameters

- x: float in the interval [-1.0, 1.0]

- Horizontal coordinate, -1.0 is leftmost, 1.0 is rightmost.

- y: float in the interval [-1.0, 1.0]

- Vertical coordinate, -1.0 is down, 1.0 is up.

- z: float in the interval [-1.0, 1.0]

- Depth. -1.0 is the near plane, which lies exactly behind our screen, and 1.0 is the far clipping plane.

Return type: np.ndarray(3, dtype=float)

Returns: The point in 3D coordinates (world coordinates).
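Mouse events report pixel positions, so a click has to be mapped into the [-1.0, 1.0] ranges above before it can be passed to unproject(). The following is a minimal sketch of that mapping, assuming the Qt widget convention of a pixel origin at the top-left corner with y growing downwards. `pixel_to_ndc` is a hypothetical helper, not part of chemlab.

```python
def pixel_to_ndc(px, py, width, height):
    """Map a pixel position to x, y in [-1.0, 1.0] (y pointing up)."""
    x = 2.0 * px / width - 1.0
    # Flip the vertical axis: pixel y grows downwards, screen y grows upwards.
    y = 1.0 - 2.0 * py / height
    return x, y


center = pixel_to_ndc(400, 300, 800, 600)
top_left = pixel_to_ndc(0, 0, 800, 600)
```

The resulting pair can then be passed as the x and y arguments, with z chosen between the near plane (-1.0) and the far plane (1.0).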